

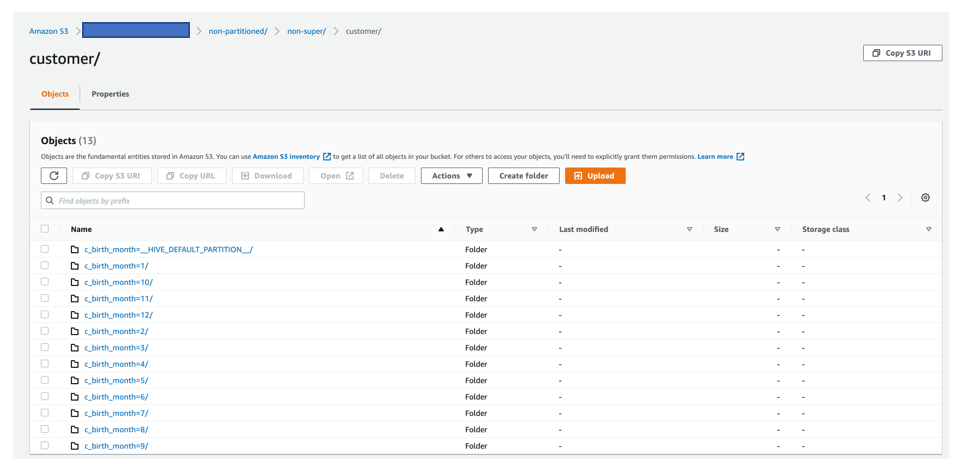

Redshift unload set bucket owner

12/21/2023

Using the --steps argument it's possible to skip some parts of the transfer, or to resume a failed transfer.

BigQuery table ID

By default the BigQuery table ID will be the same as the Redshift table name, but the optional argument --bq-table can be used to tell BigShift to use another table ID.

Redshift schema

By default the schema in Redshift is called public, but if you're not using that one, you can use the --rs-schema argument to specify the schema your table is in.

S3 prefix

If you don't want to put the data dumped from Redshift directly into the root of the S3 bucket, you can use --s3-prefix to provide a prefix under which the dumps should be placed.

Because of how GCS Transfer Service works, the transferred files will have exactly the same keys in the destination bucket; this cannot be configured.

The --rs-credentials argument must be a path to a JSON or YAML file that contains the host and port of the Redshift cluster, as well as the username and password required to connect, for example port: 5439, username: my_redshift_user and password: dGhpc2lzYWxzb2Jhc2U2NAo, together with the cluster's host.

The optional --aws-credentials argument can point to a JSON or YAML file that contains access_key_id and secret_access_key, and optionally region. These credentials need read and write access to the S3 location, which an IAM policy can restrict to the resource "arn:aws:s3:::THE-NAME-OF-THE-BUCKET/THE/PREFIX/*". If you set THE-NAME-OF-THE-BUCKET to the same value as --s3-bucket and THE/PREFIX to the same value as --s3-prefix, you limit the damage that BigShift can do, and unless you store something else at that location there is very little damage to be done.

It is strongly recommended that you create a specific IAM user with minimal permissions for use with BigShift. The nature of GCS Transfer Service means that these credentials are sent to and stored in GCP. The credentials are also used in the UNLOAD command sent to Redshift, and with the AWS SDK to work with the objects on S3.
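The text suggests limiting BigShift's AWS credentials to arn:aws:s3:::THE-NAME-OF-THE-BUCKET/THE/PREFIX/*. A minimal Python sketch of such a policy document follows. The helper name minimal_s3_policy and the exact S3 action lists are assumptions for illustration, not BigShift's documented requirements, so check them against what your transfer actually does.

```python
import json


def minimal_s3_policy(bucket: str, prefix: str) -> dict:
    """Build an IAM policy document scoped to one S3 bucket prefix.

    Hypothetical helper. The action lists are assumptions about what
    unloading, transferring and cleaning up dumps requires, not a list
    taken from BigShift itself.
    """
    prefix = prefix.strip("/")
    object_arn = f"arn:aws:s3:::{bucket}/{prefix}/*" if prefix else f"arn:aws:s3:::{bucket}/*"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Object-level actions, limited to keys under the prefix.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [object_arn],
            },
            {
                # Bucket-level actions must name the bucket ARN itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
            },
        ],
    }


if __name__ == "__main__":
    # Use the same values you pass as the S3 bucket and prefix arguments.
    print(json.dumps(minimal_s3_policy("THE-NAME-OF-THE-BUCKET", "THE/PREFIX"), indent=2))
```

The object and bucket actions sit in separate statements because actions such as s3:ListBucket apply to the bucket ARN, not to object ARNs under a prefix.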
You can provide AWS credentials the same way that you can for the AWS SDK, that is with environment variables, files in specific locations in the file system, etc. See the AWS SDK documentation for more information. Unfortunately, you can't use temporary credentials, like instance role credentials, because GCS Transfer Service doesn't support session tokens.

You can provide GCP credentials either with the environment variable GOOGLE_APPLICATION_CREDENTIALS or with the --gcp-credentials argument. Either must be a path to a JSON file that contains a public/private key pair for a GCP user. The best way to obtain this is to create a new service account and choose JSON as the key type when prompted. If BigShift is run directly on Compute Engine, Kubernetes Engine or App Engine flexible environment, the embedded service account will be used instead; see the GCP documentation for more information. Please note that the service account will need to have the cloud-platform authorization scope, as detailed in the Storage Transfer Service documentation. If you haven't used Storage Transfer Service with your destination bucket before, the bucket might not have the right permissions set up; see the Troubleshooting section of the BigShift documentation for more information.

Running bigshift without any arguments, or with --help, will show the options. All except --s3-prefix, --rs-schema, --bq-table, --max-bad-records, --steps and --compress are required.

Depending on your setup and data, the individual compressed files produced by Redshift might become larger than BigQuery's compressed file size limit of 4 GiB. In these cases you need to either uncompress the files manually on the GCP side (for example by running BigShift with just --steps unload,transfer to get the dumps to GCS), or dump and transfer uncompressed files (with --no-compression), at a higher bandwidth cost.
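Whether compressed dumps will exceed BigQuery's 4 GiB compressed file limit can be checked before the load step. The sketch below assumes you already have the size in bytes of each dump file (for example from listing the S3 prefix); the function name oversized_dumps and the example keys are hypothetical.

```python
from __future__ import annotations

# BigQuery's compressed file size limit as stated in the text: 4 GiB.
BQ_COMPRESSED_FILE_LIMIT = 4 * 1024**3


def oversized_dumps(sizes: dict[str, int], limit: int = BQ_COMPRESSED_FILE_LIMIT) -> list[str]:
    """Return, sorted, the keys of dump files whose size in bytes exceeds the limit."""
    return sorted(key for key, size in sizes.items() if size > limit)


if __name__ == "__main__":
    # Hypothetical object keys and sizes, e.g. gathered by listing the S3 prefix.
    dumps = {
        "THE/PREFIX/part_00.gz": 3 * 1024**3,
        "THE/PREFIX/part_01.gz": 5 * 1024**3,
    }
    too_big = oversized_dumps(dumps)
    if too_big:
        print("Too large for a compressed BigQuery load:", ", ".join(too_big))
        print("Consider uncompressing on the GCP side or dumping without compression.")
```

If any keys come back, the two workarounds described above apply: uncompress on the GCP side, or dump and transfer uncompressed files.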
Please note that transferring large amounts of data between AWS and GCP is not free: AWS charges for outgoing traffic from S3. There are also storage charges for the Redshift dumps on S3 and GCS, but since they are kept only until the BigQuery table has been loaded, those should be negligible.

BigShift tells Redshift to compress the dumps by default, even if that means that the BigQuery load will be slower, in order to minimize the transfer cost.
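To make the compression trade-off concrete, here is a rough Python sketch of the kind of Redshift UNLOAD statement involved: it dumps one table to an S3 prefix using key-based credentials and adds GZIP when compression is on. It rests on assumptions (the helper names, the target key layout and the option set are invented here) and is not the statement BigShift actually sends.

```python
def quote_ident(name: str) -> str:
    """Quote a Redshift identifier, doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def sql_literal(value: str) -> str:
    """Render a single-quoted SQL string literal, doubling embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_unload(table: str, bucket: str, prefix: str, access_key_id: str,
                 secret_access_key: str, schema: str = "public", compress: bool = True) -> str:
    """Build an illustrative Redshift UNLOAD statement (hypothetical helper)."""
    query = f"SELECT * FROM {quote_ident(schema)}.{quote_ident(table)}"
    prefix = prefix.strip("/")
    # Assumed key layout: <prefix>/<table>_ followed by Redshift's own part suffixes.
    target = f"s3://{bucket}/{prefix}/{table}_" if prefix else f"s3://{bucket}/{table}_"
    credentials = f"aws_access_key_id={access_key_id};aws_secret_access_key={secret_access_key}"
    lines = [
        f"UNLOAD ({sql_literal(query)})",
        f"TO {sql_literal(target)}",
        f"CREDENTIALS {sql_literal(credentials)}",
        "ALLOWOVERWRITE",
    ]
    if compress:
        # Compressed dumps mean fewer bytes leave S3, at the cost of a slower load.
        lines.append("GZIP")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical table name and placeholder keys.
    print(build_unload("events", "THE-NAME-OF-THE-BUCKET", "THE/PREFIX",
                       "MY_ACCESS_KEY_ID", "MY_SECRET_ACCESS_KEY"))
```

Because these key-based credentials end up in the SQL text, this is one more reason to give BigShift a dedicated IAM user with minimal permissions.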

On the AWS side you need a Redshift cluster and an S3 bucket, and credentials that let you read from Redshift and read and write to the S3 bucket (it doesn't have to be the whole bucket; a prefix works fine). On the GCP side you need a Cloud Storage bucket, a BigQuery dataset, and credentials that allow reading and writing to the bucket and creating BigQuery tables.

The main interface to BigShift is the bigshift command line tool. BigShift can also be used as a library in a Ruby application; look at the tests, and at how the bigshift tool is built, to figure out how.

Because a transfer can take a long time, it's highly recommended that you run the command in screen or tmux, or use some other mechanism that ensures the process isn't killed prematurely.
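Since a transfer can run for a long time, it may be worth checking up front that the credential files contain the keys the text lists: host, port, username and password for Redshift, and access_key_id and secret_access_key (plus an optional region) for AWS. This is a minimal sketch that only handles JSON; YAML files would need a parser such as PyYAML. The function names are hypothetical.

```python
from __future__ import annotations

import json

# Keys the text lists for each credentials file ("region" is optional for AWS).
RS_REQUIRED = {"host", "port", "username", "password"}
AWS_REQUIRED = {"access_key_id", "secret_access_key"}


def missing_keys(text: str, required: set[str]) -> set[str]:
    """Parse a JSON credentials document and return the required keys it lacks."""
    data = json.loads(text)
    if not isinstance(data, dict):
        raise ValueError("credentials file must contain a JSON object")
    return required - set(data)


def check_file(path: str, required: set[str]) -> None:
    """Raise ValueError if the JSON file at path is missing any required key."""
    with open(path, encoding="utf-8") as f:
        missing = missing_keys(f.read(), required)
    if missing:
        raise ValueError(f"{path} is missing: {', '.join(sorted(missing))}")


if __name__ == "__main__":
    # Inline example with a hypothetical host; real files go through check_file.
    rs = '{"host": "example-host", "port": 5439, "username": "my_redshift_user"}'
    print(sorted(missing_keys(rs, RS_REQUIRED)))
```

Running a check like this before starting the transfer in screen or tmux means a typo in a credentials file fails immediately instead of partway through.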
